AI agents like ChatGPT and Google Bard are great for answering questions and carrying on conversations in a human-like way. However, with a few simple techniques you can get these technologies, or more accurately the large language models (LLMs) that power them, to act in more specific roles and to respond in the specific ways your use case requires.

For example, ChatGPT acts like a friendly, helpful chatbot by default. However, by adjusting the input that you provide you can have ChatGPT act in a wide variety of ways. These range from the comedic to the highly useful.

When you provide specific instructions to an LLM so that it responds in a different way or behaves in line with a particular role, the technique is called prompt engineering.

Providing Instructions

As a trivial example, we can instruct an LLM like Google Bard to act as a bot that answers only yes/no questions:

In this case, after considerable trial and error, we were able to provide sufficient instructions to have Bard respond with only yes or no answers.
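To make the idea concrete, here is a minimal Python sketch of how such an instruction might be placed ahead of a question. The instruction wording, the `build_prompt` helper, and the `is_valid_yes_no` check are all hypothetical illustrations, not the prompt from the experiment above, and no model is actually called.

```python
# Hypothetical instruction text, written for illustration only.
YES_NO_INSTRUCTIONS = (
    "You are a yes/no question answering bot. "
    "Reply with exactly one word, either 'yes' or 'no'. "
    "Do not add explanations or any other text."
)


def build_prompt(instructions: str, question: str) -> str:
    """Place the instructions ahead of the user's question in one prompt string."""
    return f"{instructions}\n\nQuestion: {question}\nAnswer:"


def is_valid_yes_no(reply: str) -> bool:
    """Check a reply against the constraint, ignoring case and a trailing period."""
    return reply.strip().lower().rstrip(".") in {"yes", "no"}


prompt = build_prompt(YES_NO_INSTRUCTIONS, "Is the sky blue?")
print(prompt)
```

A check like `is_valid_yes_no` is one way to notice, during trial and error, when the model has drifted back to its chatty default.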

As a more complex example, we can use the knowledge that ChatGPT has accumulated to turn it from a friendly chatbot into an API for recommending movies:

There are a few things to note in this prompt:

- First, we told ChatGPT how we wanted it to behave, in this case as a movie recommender API that provides JSON responses about movies.

- Second, we explained how we wanted ChatGPT to craft the responses, in this case as a JSON array containing properties such as the name of the movie, the year it was released and the leading actors.

- Lastly, we had to tell the overly verbose ChatGPT to respond only with the JSON and not its usual upbeat “Sure! I am happy to help you with that. Here is blah, blah, blah…”

For the remainder of the conversation, ChatGPT should behave in a way consistent with this prompt.

Note that in this example, even though we tried to trick ChatGPT into disregarding our prompt by asking it to recommend some movies, it detected that “recommend some movies” was not the name of a movie and responded with an empty JSON array instead. Once we provided a movie name as our next input, it provided what we asked for and honestly did a pretty good job with its recommendations.

In this example, we simply asked ChatGPT to respond in a specific way that is very different from its usual behavior. We didn’t need to provide any additional information to the LLM for it to generate its response.
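If you wanted to use a prompt like this from code, a rough Python sketch might look like the following. The prompt wording, the property names (`name`, `year`, `leading_actors`), and the system/user message layout are assumptions made for illustration; they are not the exact prompt used above, and the sketch does not call any model.

```python
import json

# Hypothetical prompt covering the three points above: the role,
# the response format, and the "JSON only" rule.
SYSTEM_PROMPT = (
    "You are a movie recommender API. The user sends the name of a movie. "
    "Respond with a JSON array of similar movies, where each element has the "
    'properties "name", "year", and "leading_actors". '
    "Respond only with the JSON and no other text."
)


def build_messages(movie_name: str) -> list[dict[str, str]]:
    """Pair the standing instructions with the user's input (assumed chat-style layout)."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": movie_name},
    ]


def parse_recommendations(reply: str) -> list[dict]:
    """Parse a reply, raising ValueError unless it is a JSON array of objects."""
    data = json.loads(reply)  # JSONDecodeError is a subclass of ValueError
    if not isinstance(data, list) or not all(isinstance(item, dict) for item in data):
        raise ValueError("expected a JSON array of objects")
    return data
```

Parsing the reply strictly is a cheap way to catch the cases where the model slips back into conversational text; an empty array, like the one returned for “recommend some movies”, parses to an empty list.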

Supplying Examples

Another helpful technique that can improve the accuracy of results you receive from an LLM request is to provide examples of how you want the system to respond. For example, let’s say we want the LLM to replace the subject of every sentence we input with the name of an animal instead:

Here you can see that the LLM didn’t quite understand what we meant. It was close and used an emoji of a dog, but didn’t produce the exact response we were aiming for. To correct this behavior, we can supply examples that demonstrate the behavior we want from the LLM:
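As a rough illustration of supplying examples, the Python sketch below assembles an instruction, a couple of worked input/output pairs, and the new input into a single prompt. The `build_few_shot_prompt` helper, the `Input:`/`Output:` labels, and the example sentences are all hypothetical; they are not the examples used in the conversation above.

```python
def build_few_shot_prompt(
    instruction: str, examples: list[tuple[str, str]], new_input: str
) -> str:
    """Combine an instruction, worked examples, and a new input into one prompt."""
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # End with an unanswered "Output:" so the model completes the pattern.
    lines.append(f"Input: {new_input}")
    lines.append("Output:")
    return "\n".join(lines)


# Made-up demonstration pairs showing the subject replaced by an animal.
EXAMPLES = [
    ("The teacher graded the exams.", "The owl graded the exams."),
    ("My brother fixed the bike.", "The otter fixed the bike."),
]

prompt = build_few_shot_prompt(
    "Replace the subject of each sentence with the name of an animal.",
    EXAMPLES,
    "The pilot landed the plane.",
)
print(prompt)
```

The examples carry details that are hard to state in words alone, such as using the animal's name rather than an emoji.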

Adding Relevant Context

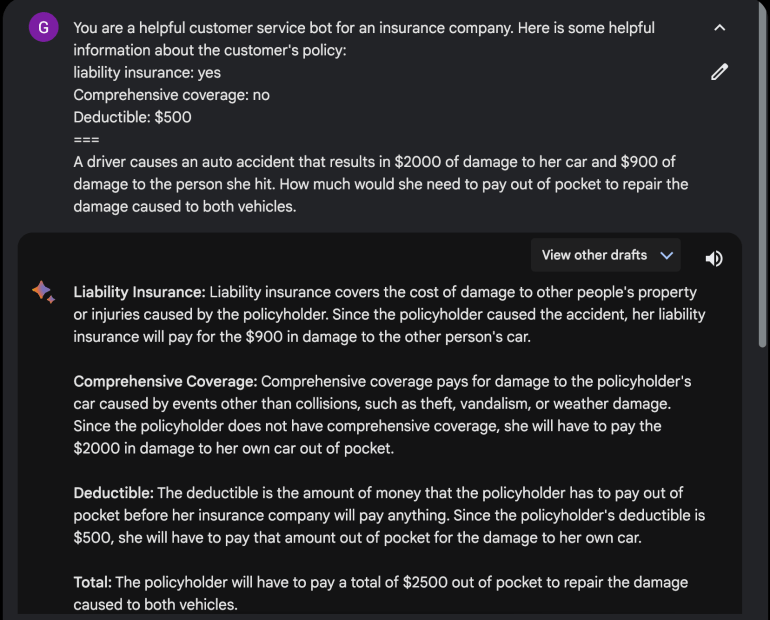

Let’s say we want our LLM to act as a helpful customer service agent at an insurance company. Imagine a customer asks a question like the following one:

In this case, Bard acknowledges that the out-of-pocket charges the driver would need to pay depend on several variables. However, we can augment this prompt with information about the driver.

With this additional context, Bard now provides an accurate answer based on the damage to the vehicles and the parameters of the customer’s insurance policy.
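One way to picture this augmentation in code is the Python sketch below, which prepends known facts about the customer to the question before it is sent to the model. The `build_contextual_prompt` helper, the field names, and every value shown are made up for illustration; they do not come from the conversation above.

```python
def build_contextual_prompt(role: str, context: dict[str, str], question: str) -> str:
    """Prepend a role and known customer facts to the customer's question."""
    context_lines = [f"- {key}: {value}" for key, value in context.items()]
    return (
        f"{role}\n\n"
        "Use the following customer information when answering:\n"
        + "\n".join(context_lines)
        + f"\n\nCustomer question: {question}"
    )


# Hypothetical policy and claim details, invented for this sketch.
customer_context = {
    "Collision deductible": "$500",
    "Estimated repair cost": "$2,300",
    "At-fault driver": "the customer",
}

prompt = build_contextual_prompt(
    "You are a helpful customer service agent at an insurance company.",
    customer_context,
    "How much will I have to pay out of pocket for the repairs?",
)
print(prompt)
```

In a real application, the context would typically be looked up from the customer's records rather than typed in by hand.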

Conclusion & Further Reading

The techniques we’ve seen so far in this post will help you greatly improve the responses you get from LLMs like ChatGPT and Google Bard. These methods will help you achieve the behavior you want from LLMs, which can go substantially beyond what you get from simple chat exchanges.

Even though we’ve covered a lot in this article, there’s still much more to say on this subject. Stay tuned for upcoming articles where we look at more advanced topics, such as Chain of Thought and Retrieval Augmented Generation, which will allow you to achieve even better results via prompt engineering.
